This repo is pretty much all in the main master branch, although there remain vestigial branches for IPython notebook versions 2.x and 3.x. Most nbextensions have been updated to work with both Jupyter 4.x and 5.x, but occasionally things get missed, or the Jupyter API changes in a minor version update. If anything doesn't work as you'd expect, please check the issues, or open a new one as necessary.

IPython/Jupyter version support

For Jupyter version 4 or 5, use the master branch of the repository. The maturity of the provided extensions varies. The IPython-contrib repository is maintained independently by a group of users and developers and is not officially related to the IPython development team.

These extensions are mostly written in JavaScript and will be loaded locally in

Put everything in a nice case

Finally we put everything into a nice case, connect the power supply, and attach everything to a switch using the following parts:

The final mini cluster.

This repository contains a collection of extensions that add functionality to

`su - hduser -c "/opt/apache-hive-2.1.1-bin/bin/hiveserver2 &"`

before `exit 0`.

And in $HADOOP_HOME/etc/hadoop/ we have to add to core-site.xml

Switch to the orangepi user, open the file with `sudo nano /etc/rc.local`, and insert

`su - hduser -c "/opt/hadoop-2.7.3/sbin/start-dfs.sh &"`

`su - hduser -c "ipython notebook --profile=pyuser &"`
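As a rough sketch, the boot-time additions to /etc/rc.local described in this section could be collected as follows. The three `su` lines and the install paths come from the text. The start order is an assumption (HDFS first, since Hive keeps its warehouse there), and `--profile` is written with two dashes, as IPython expects.

```shell
# Lines to add near the end of /etc/rc.local (sketch).
# Each service is started as hduser; the order below is an assumption.

# 1. HDFS, so that Hive can reach its warehouse directory.
su - hduser -c "/opt/hadoop-2.7.3/sbin/start-dfs.sh &"

# 2. HiveServer2, which accepts client connections to Hive.
su - hduser -c "/opt/apache-hive-2.1.1-bin/bin/hiveserver2 &"

# 3. The IPython notebook, using the pyuser profile.
su - hduser -c "ipython notebook --profile=pyuser &"

# rc.local has to end with exit 0, so everything above comes before it.
exit 0
```

Because each command ends in `&` inside the quoted string, `su` returns right away and the boot sequence is not blocked while the services come up.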

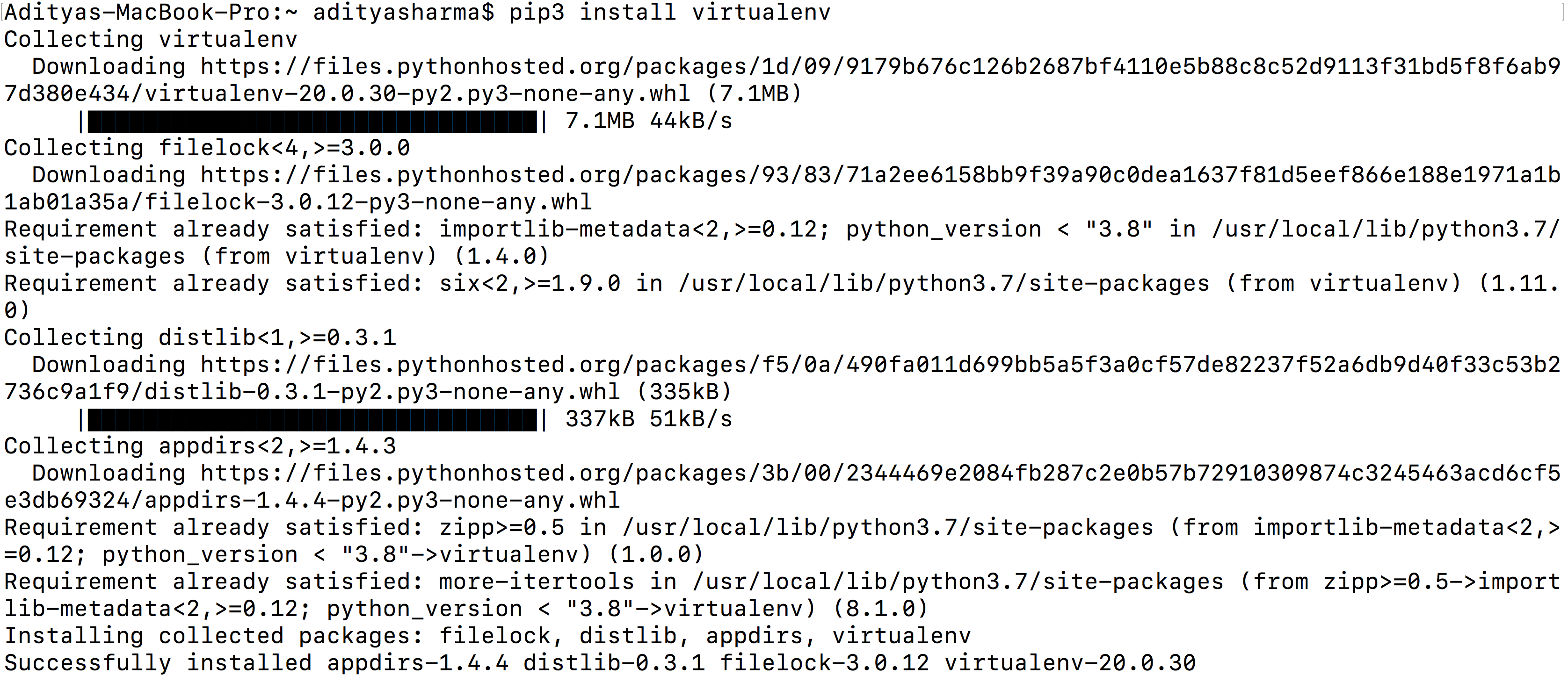


To connect IPython and Hive, as the orangepi user we first need to install the Python package manager pip with `sudo apt-get install python-pip python-dev build-essential`.

That's it: Hive should now be installed. We can now check the installation with

Temporary local directory for added resources in the remote file system.

Location of Hive run time structured log file

Finally we need to copy the default configuration with `sudo cp hive-default.xml` and make some changes, such as replacing $ with $HIVE_HOME/iotmp, so that it looks like this (hint: use Ctrl+W in nano to find the locations)

In the next step we have to create the metastore with `schematool -initSchema -dbType derby`.

`$HADOOP_HOME/bin/hadoop fs -chmod g+w /user/hive/warehouse`

`$HADOOP_HOME/bin/hadoop fs -chmod g+w /tmp`

`$HADOOP_HOME/bin/hadoop fs -mkdir /user/hive`

`$HADOOP_HOME/bin/hadoop fs -mkdir /user/hive/warehouse`
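The HDFS preparation described here (creating /tmp and the Hive directories, then making /tmp and the warehouse group-writable) can be sketched as one small script. The paths and `hadoop fs` subcommands are the ones used in this guide. `DRY_RUN`, `hdfs_cmd`, and `setup_hive_dirs` are hypothetical helper names introduced only for illustration, and the script assumes that /user already exists in HDFS.

```shell
#!/bin/sh
# Sketch: create the HDFS directories Hive expects and make them group-writable.
# The default HADOOP_HOME is the install path used in this guide.
HADOOP_HOME="${HADOOP_HOME:-/opt/hadoop-2.7.3}"

# DRY_RUN is a hypothetical switch, not a Hadoop feature. While it is 1 the
# commands are only printed, so the script can be checked without a cluster.
DRY_RUN="${DRY_RUN:-1}"

hdfs_cmd() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "hadoop fs $*"
    else
        "$HADOOP_HOME/bin/hadoop" fs "$@"
    fi
}

setup_hive_dirs() {
    # Parents first: /user/hive has to exist before /user/hive/warehouse.
    hdfs_cmd -mkdir /tmp
    hdfs_cmd -mkdir /user/hive
    hdfs_cmd -mkdir /user/hive/warehouse
    # Give the group write access to the two directories Hive writes to.
    hdfs_cmd -chmod g+w /tmp
    hdfs_cmd -chmod g+w /user/hive/warehouse
}

setup_hive_dirs
```

Run it as hduser with `DRY_RUN=0` once HDFS is up. If a directory already exists, plain `-mkdir` reports an error for that path; Hadoop 2.x also accepts `-mkdir -p`, which creates missing parents and tolerates existing directories.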

Finally we need to create the /tmp folder and a separate Hive folder in HDFS with

Go to the configuration directory with `cd $HIVE_HOME/conf`. As the orangepi user, copy the template with `sudo cp hive-env.sh.template hive-env.sh`, open it with `sudo nano hive-env.sh`, and insert the location of Hadoop: `export HADOOP_HOME=/opt/hadoop-2.7.3`.

To set the environment variables, switch to the hduser, open `nano ~/.bashrc`, and add

`export HIVE_HOME=/opt/apache-hive-2.1.1-bin`

`export PATH=$PATH:$HIVE_HOME/bin`

As usual we extract the file with `sudo tar -xvzf hive-2.1.1/apache-hive-2.1.` and change the ownership with `sudo chown -R hduser:hadoop apache-hive-2.1.1-bin/`.
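For reference, the two ~/.bashrc lines for hduser might look like the sketch below. The Hive path is the one used in this guide. Appending to the existing `$PATH` rather than replacing it is an assumption about what the original lines intended.

```shell
# Lines to append to /home/hduser/.bashrc (sketch).
# HIVE_HOME is the directory the Hive archive was extracted to in this guide.
export HIVE_HOME=/opt/apache-hive-2.1.1-bin

# Append Hive's bin directory to the existing PATH instead of overwriting it,
# so that system commands such as ls and nano keep working.
export PATH="$PATH:$HIVE_HOME/bin"
```

Run `source ~/.bashrc` (or log in again) so the current shell picks up the change; `echo $HIVE_HOME` should then print the path set above.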


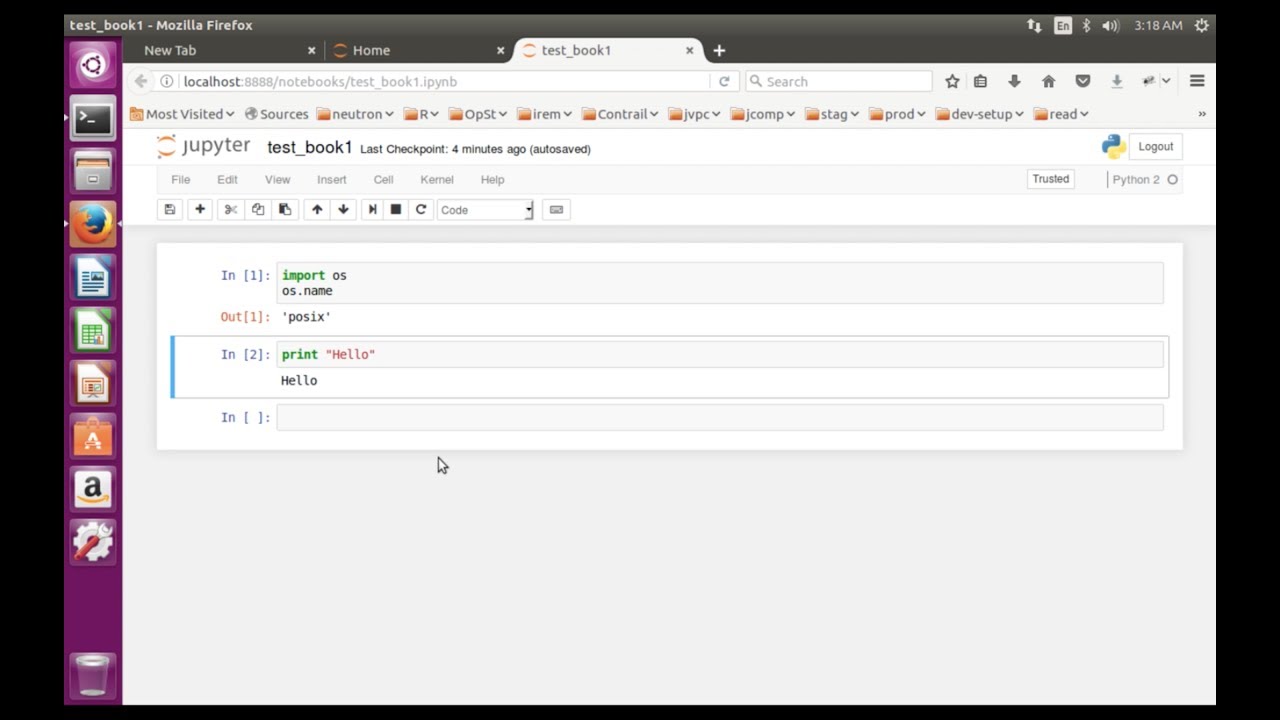

To install Hive we first need to download the package as the orangepi user in the /opt/ directory with sudo wget $ (look here for the latest release).

To start the IPython notebook, execute `ipython notebook --profile=pyuser`.

To be able to create nice plots we will also install matplotlib via `sudo apt-get install python-matplotlib`.

Switch to the hduser and make a new IPython profile with `ipython profile create pyuser`. Open the config file with `nano /home/hduser/.ipython/profile_pyuser/ipython_config.py` and add

Establish the connection to Spark at startup with `nano /home/hduser/.ipython/profile_pyuser/startup/00-pyuser-setup.py` and add


In this section we will install some tools which will make life easier. In contrast to Spark or Hadoop, they only need to be installed on the main node and not on all cluster nodes.

2.1 Install IPython

As the orangepi user, install IPython with `sudo apt-get update` and `sudo apt-get install ipython ipython-notebook`.